Hierarchical caching

One of the cool new features in the 2008.1 release of the eZ Components library is hierarchical caching.

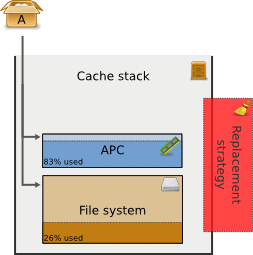

Until now, we supported several types of cache storage. Some of these use the file system to store data, while others use APC or Memcache. File-based caches can usually be large, since disk space keeps getting cheaper, but they are also much slower than memory-based caches. RAM caches, in contrast, are blazingly fast, but memory is still much more limited than disk space. It is therefore sensible to keep the most important data for a website in memory, while less important data gets cached on disk.

The new ezcCacheStack class in the eZ Cache component provides an automatic way of achieving this. You simply stack together an arbitrary number of storages. The stack stores every item in all of the stacked caches. You can configure how many items may reside in each storage. A replacement strategy class takes care of purging a certain number of items when a storage runs full. On restore, the stack fetches the desired item from the topmost cache in which it is still stored.
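To make the store and restore behaviour concrete, here is a minimal conceptual sketch in Python. It is not the eZ Components API: `BoundedStorage`, `CacheStack`, `push_storage` and the limits are hypothetical names chosen for illustration, and the sketch evicts one item at a time rather than purging a configurable number of items.

```python
from collections import OrderedDict


class BoundedStorage:
    """One tier of the stack (hypothetical): holds at most `limit` items."""

    def __init__(self, name, limit):
        self.name = name
        self.limit = limit
        self.items = OrderedDict()  # least recently used item comes first

    def store(self, key, value):
        self.items[key] = value
        self.items.move_to_end(key)
        # Storage is full: purge the least recently used items.
        while len(self.items) > self.limit:
            self.items.popitem(last=False)

    def restore(self, key):
        if key not in self.items:
            return None
        self.items.move_to_end(key)  # mark as recently used
        return self.items[key]


class CacheStack:
    """Stack of storages (hypothetical); index 0 is the topmost one."""

    def __init__(self):
        self.storages = []

    def push_storage(self, storage):
        self.storages.insert(0, storage)

    def store(self, key, value):
        # Every item is written to all stacked storages.
        for storage in self.storages:
            storage.store(key, value)

    def restore(self, key):
        # Return the item from the topmost storage that still holds it.
        for storage in self.storages:
            value = storage.restore(key)
            if value is not None:
                return value, storage.name
        return None, None


stack = CacheStack()
stack.push_storage(BoundedStorage("disk", limit=100))  # large but slow
stack.push_storage(BoundedStorage("memory", limit=2))  # small but fast

for key in ("a", "b", "c"):
    stack.store(key, key.upper())

hit_c = stack.restore("c")  # still in the small memory tier
hit_a = stack.restore("a")  # purged from memory, served from disk
```

In this sketch the caller never decides where an item lives: the small top tier keeps whatever was used most recently, and everything else falls through to the larger tier below.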

The replacement strategies shipped with the eZ Cache component provide you with two well-known cache algorithms: Least Recently Used (LRU) and Least Frequently Used (LFU). LRU keeps track of when each cache item was last used and, when a storage runs full, discards the items that have gone unused for the longest time. LFU, in contrast, purges the items that have been used least frequently. If neither of these strategies fits your needs, you can quite easily implement your own.
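The difference between the two strategies shows up as soon as an item is used often but not recently. The following Python sketch is a simplified illustration, not the component's implementation; the access log and item names are made up.

```python
from collections import Counter

# Hypothetical access log: "home" is used often, but not recently.
accesses = ["home", "home", "home", "about", "about", "news"]

# LRU bookkeeping: remember the position of each item's last use.
last_used = {}
for tick, key in enumerate(accesses):
    last_used[key] = tick

# LFU bookkeeping: count how often each item was used.
use_count = Counter(accesses)

# LRU purges the item whose last use lies furthest in the past.
lru_victim = min(last_used, key=last_used.get)

# LFU purges the item with the fewest uses.
lfu_victim = min(use_count, key=use_count.get)
```

With this log, LRU would purge `home` (its last use is the oldest), while LFU would purge `news` (it was used only once). Which behaviour is preferable depends on the access pattern of your site.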

Using the cache stack with an appropriate replacement strategy means you no longer need to care which items are stored where: you simply use the stack as your only cache storage.

There are lots of other cool things in the 2008.1 package, which was released last Monday. We have three new components: Document, to parse and render different document formats; Feed, for the creation and aggregation of XML feeds; and Search, a search engine abstraction layer modelled after the PersistentObject component. Besides that, some other components got major new features. Feedback is, as usual, highly appreciated. Enjoy!
